Open-sourcing python-memtools: a memory analyzer for Python programs

Sometimes in the course of software engineering, difficult bugs arise that existing tools can’t solve, or even shed much light on. These bugs can lead to trial-and-error debugging, or to abandoning the approach entirely and trying a different path, but sometimes neither of those options is suitable. What’s an engineer to do?


To illustrate, we’ll tell a story of one such bug.

▶ The situation

We have a Python script that generates data for one of our systems. Because of the nature of this data, the script runs for a long time, often multiple days. We found that the script would sometimes hang with 0% CPU usage, seemingly doing nothing but never completing. On the rare occasions when this happened, it was after more than a day of runtime, and we had to throw out a significant amount of that run’s output. This script’s output is very important, so we needed to debug and fix this quickly!

One way to investigate would be to add a signal handler that finds all async tasks and prints them out. However, this would require restarting the process with a new version of the script, and the debugging cycle would be far too long for comfort: we might have to wait over a week for the bug to trigger again.
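For reference, the signal-handler approach we ruled out might look roughly like the sketch below. It uses only standard asyncio APIs and is Unix-only because it relies on loop.add_signal_handler; the names format_tasks and install_task_dump_handler are our own illustration, not code from the script.

```python
import asyncio
import io
import signal
import sys


def format_tasks() -> str:
    """Describe every unfinished task on the running event loop, with its stack.

    Must be called from code running on the event loop's thread.
    """
    buf = io.StringIO()
    for task in asyncio.all_tasks():
        print(f"{task.get_name()}: {task!r}", file=buf)
        task.print_stack(file=buf)
    return buf.getvalue()


def _dump_tasks_to_stderr() -> None:
    sys.stderr.write(format_tasks())


def install_task_dump_handler(loop: asyncio.AbstractEventLoop) -> None:
    """Dump all tasks to stderr whenever the process receives SIGUSR1 (Unix only)."""
    # add_signal_handler runs the callback on the loop itself, so format_tasks
    # sees a running loop. Trigger it with e.g. `kill -USR1 <pid>`.
    loop.add_signal_handler(signal.SIGUSR1, _dump_tasks_to_stderr)
```

Calling install_task_dump_handler(asyncio.get_running_loop()) at the start of the main coroutine would make every pending task and its suspension point visible on demand, but only for processes started after the handler was added.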

So we need to see what’s happening in this process without restarting it. Some existing tools can do this sort of thing; for example, py-spy can show the stack traces of all threads in a process without interfering with it (other than a short pause). But py-spy’s output showed only idle threads, like this:

```
Thread 1923485 (idle): "MainThread"
    select (selectors.py:469)
    _run_once (asyncio/base_events.py:1871)
    run_forever (asyncio/base_events.py:603)
    run_until_complete (asyncio/base_events.py:636)
    run (asyncio/runners.py:44)
    <module> (generate.py:55)
```

It’s clear that either a service that this script depends on is very slow, or something is going wrong in async-land, but we don’t have visibility into what exactly is happening there. What we really need is a list of all the async tasks and their attributes, but we still need to get it without restarting the process.

▶ The solution

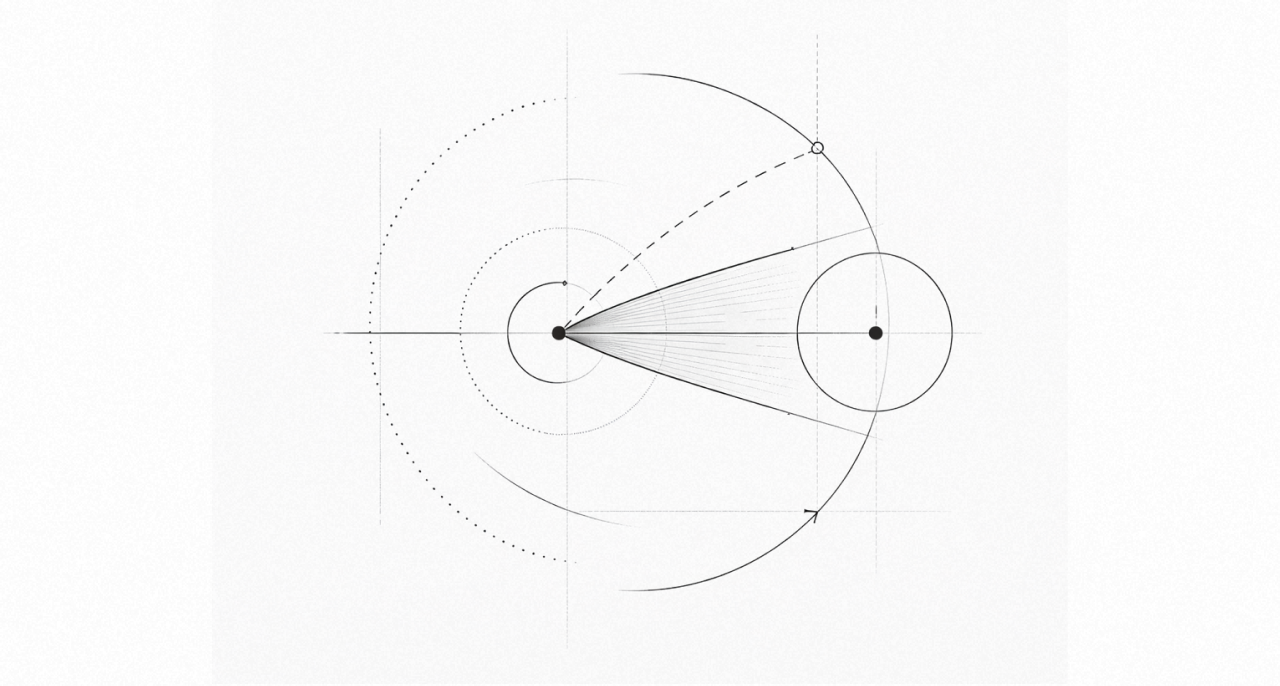

We built a memory analyzer that takes a snapshot of a Python process’s memory, then finds all the Python objects in it using a few strong heuristics. The resulting graph of objects can then be analyzed interactively to understand what’s going on inside the process.

The first step is to find the base type object (the type object for type itself) by scanning the entire memory space in the snapshot. This object has a distinctive memory signature with a few unique features:

• Its type pointer points to itself, which shouldn't be the case for any other object.

• Its name pointer points to the C string "type".

• It has many other pointer fields, each of which must either be null or point to a valid memory address.

These features are distinctive enough that, in practice, exactly one object matches them: we haven’t yet seen any false positives, or failed to find the base type object in any snapshot.
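To make the three checks concrete, here is a minimal sketch, not python-memtools’ actual implementation. It assumes a little-endian, 64-bit, non-debug CPython 3.10 build, where ob_type sits at offset 8 and tp_name at offset 24 of a type object, and it models the snapshot as a single contiguous byte region through a hypothetical Snapshot class. Which pointer fields to check (here tp_dealloc, then tp_getattr through tp_as_buffer) is also our own choice.

```python
import struct
from dataclasses import dataclass
from typing import Optional

PTR_SIZE = 8  # assumes a little-endian 64-bit process

# PyTypeObject field offsets on a 64-bit, non-debug CPython 3.10 build.
OB_TYPE = 8   # PyObject_VAR_HEAD: ob_refcnt (0), ob_type (8), ob_size (16)
TP_NAME = 24  # const char *tp_name
# tp_dealloc (48), then tp_getattr (64) through tp_as_buffer (160).
POINTER_FIELDS = (48,) + tuple(range(64, 168, PTR_SIZE))
HEADER_SIZE = 168  # bytes that must be readable for all the checks below


@dataclass
class Snapshot:
    """Hypothetical stand-in for a memory snapshot: one contiguous region."""
    base: int
    data: bytes

    def contains(self, addr: int, size: int = 1) -> bool:
        return self.base <= addr and addr + size <= self.base + len(self.data)

    def read_ptr(self, addr: int) -> int:
        return struct.unpack_from("<Q", self.data, addr - self.base)[0]

    def read_cstr(self, addr: int, limit: int = 256) -> Optional[bytes]:
        """Read a NUL-terminated string, or None if it isn't in the snapshot."""
        if not self.contains(addr):
            return None
        start = addr - self.base
        end = self.data.find(b"\0", start, start + limit)
        return None if end < 0 else self.data[start:end]


def looks_like_base_type(snap: Snapshot, addr: int) -> bool:
    if not snap.contains(addr, HEADER_SIZE):
        return False
    # 1. The type pointer points back at the object itself.
    if snap.read_ptr(addr + OB_TYPE) != addr:
        return False
    # 2. The name pointer points at the C string "type".
    if snap.read_cstr(snap.read_ptr(addr + TP_NAME)) != b"type":
        return False
    # 3. Every checked pointer field is null or points into the snapshot.
    return all(
        ptr == 0 or snap.contains(ptr)
        for ptr in (snap.read_ptr(addr + off) for off in POINTER_FIELDS)
    )


def find_base_type_candidates(snap: Snapshot) -> list[int]:
    # Objects are pointer-aligned, so only aligned addresses need checking.
    start = -(-snap.base // PTR_SIZE) * PTR_SIZE
    end = snap.base + len(snap.data)
    return [a for a in range(start, end, PTR_SIZE) if looks_like_base_type(snap, a)]
```

On a real snapshot we would expect exactly one candidate; a real snapshot also spans many separate mappings, so contains() would consult a list of regions rather than a single range.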

Once python-memtools has found the base type object, it searches for other type objects by finding all valid type objects whose type pointer points to the base type object. After these two passes through the memory snapshot, we have a dictionary of all types and their addresses, which we can use to find instances of each of those types.
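The second pass can be sketched the same way, under the same assumptions (64-bit, little-endian CPython 3.10 offsets and a hypothetical single-region Snapshot class). The is_plausible_type_name check is a simplified stand-in for the fuller validation a real tool would perform, and find_type_objects is an illustrative name.

```python
import struct
from dataclasses import dataclass
from typing import Optional

PTR_SIZE = 8  # assumes a little-endian 64-bit process
OB_TYPE = 8   # offset of ob_type in every object (CPython 3.10)
TP_NAME = 24  # offset of tp_name in PyTypeObject


@dataclass
class Snapshot:
    """Hypothetical stand-in for a memory snapshot: one contiguous region."""
    base: int
    data: bytes

    def contains(self, addr: int, size: int = 1) -> bool:
        return self.base <= addr and addr + size <= self.base + len(self.data)

    def read_ptr(self, addr: int) -> int:
        return struct.unpack_from("<Q", self.data, addr - self.base)[0]

    def read_cstr(self, addr: int, limit: int = 256) -> Optional[bytes]:
        if not self.contains(addr):
            return None
        start = addr - self.base
        end = self.data.find(b"\0", start, start + limit)
        return None if end < 0 else self.data[start:end]


def is_plausible_type_name(name: Optional[bytes]) -> bool:
    # Hypothetical sanity check: a non-empty run of printable, non-space ASCII.
    return bool(name) and all(0x21 <= c <= 0x7E for c in name)


def find_type_objects(snap: Snapshot, base_type_addr: int) -> dict[int, str]:
    """Map address -> tp_name for every object whose ob_type is the base type."""
    types = {}
    start = -(-snap.base // PTR_SIZE) * PTR_SIZE
    for addr in range(start, snap.base + len(snap.data), PTR_SIZE):
        if not snap.contains(addr, TP_NAME + PTR_SIZE):
            continue
        if snap.read_ptr(addr + OB_TYPE) != base_type_addr:
            continue
        name = snap.read_cstr(snap.read_ptr(addr + TP_NAME))
        if is_plausible_type_name(name):
            types[addr] = name.decode("ascii")
    return types
```

Because the base type object’s own type pointer points to itself, it shows up in this scan too, under the name "type".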

We implemented custom validators for all the basic types (int, str, list, dict, etc.) so we can be confident that we’ve found valid objects of those types in memory, and not just data that happens to resemble them. These validators also let us understand the contents of these objects (so we know which other objects, if any, they hold references to) and format them in normal Python syntax.
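To give a flavor of what such a validator checks, here is a hypothetical one for list objects. It assumes the 64-bit, little-endian CPython 3.10 PyListObject layout (ob_item at offset 24, allocated at offset 32) and a simplified single-region snapshot, and it relies on the invariant CPython maintains for lists, 0 <= ob_size <= allocated. The names validate_list and format_list are illustrative, not python-memtools’ API.

```python
import struct
from dataclasses import dataclass
from typing import Optional

# PyListObject layout on a 64-bit, non-debug CPython 3.10 build:
# ob_refcnt (0), ob_type (8), ob_size (16), ob_item (24), allocated (32).
OB_TYPE, OB_SIZE, OB_ITEM, ALLOCATED, LIST_SIZE = 8, 16, 24, 32, 40
PTR_SIZE = 8
MIN_OBJECT_SIZE = 16  # every object starts with ob_refcnt and ob_type


@dataclass
class Snapshot:
    """Hypothetical stand-in for a memory snapshot: one contiguous region."""
    base: int
    data: bytes

    def contains(self, addr: int, size: int = 1) -> bool:
        return self.base <= addr and addr + size <= self.base + len(self.data)

    def read_u64(self, addr: int) -> int:
        return struct.unpack_from("<Q", self.data, addr - self.base)[0]

    def read_i64(self, addr: int) -> int:
        return struct.unpack_from("<q", self.data, addr - self.base)[0]


def validate_list(snap: Snapshot, addr: int, list_type_addr: int) -> Optional[list[int]]:
    """Return the addresses a list at addr refers to, or None if it isn't a valid list."""
    if not snap.contains(addr, LIST_SIZE):
        return None
    if snap.read_u64(addr + OB_TYPE) != list_type_addr:
        return None
    size = snap.read_i64(addr + OB_SIZE)
    items_ptr = snap.read_u64(addr + OB_ITEM)
    allocated = snap.read_i64(addr + ALLOCATED)
    # CPython keeps 0 <= ob_size <= allocated for every list.
    if not 0 <= size <= allocated:
        return None
    if size == 0:
        return []
    # The item array must be readable, and each entry must point at an object header.
    if not snap.contains(items_ptr, allocated * PTR_SIZE):
        return None
    items = [snap.read_u64(items_ptr + i * PTR_SIZE) for i in range(size)]
    if not all(snap.contains(p, MIN_OBJECT_SIZE) for p in items):
        return None
    return items


def format_list(item_addrs: list[int]) -> str:
    # A real tool would format each item with its own type's validator;
    # here each reference is shown only by address.
    return "[" + ", ".join(f"<object at {a:#x}>" for a in item_addrs) + "]"
```

The returned addresses are exactly the outgoing edges of this object in the object graph, which is what makes later analyses (such as following what an async task is waiting on) possible.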

With python-memtools, understanding the above scenario became much easier. We used it to take a memory snapshot, then scanned for all instances of asyncio.Task in the snapshot and organized them into a forest of trees based on what each task is waiting on. (python-memtools implements all of this in a single command.)
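The forest-building step can be sketched independently of how the wait relationships are recovered from memory. This hypothetical build_wait_forest assumes that extraction has already happened and that each task waits on at most one other task (a simplification: a task awaiting asyncio.gather, for example, waits on several). A useful property falls out: tasks in a cycle never hang off any root, so they end up in a separate group.

```python
from collections import defaultdict
from typing import Optional


def build_wait_forest(
    waiting_on: dict[str, Optional[str]],
) -> tuple[list[str], set[str]]:
    """Render tasks as trees rooted at tasks that aren't blocked on another task.

    waiting_on maps each task ID to the ID of the task it is awaiting, or None.
    Returns the rendered tree lines and the set of tasks no root can reach,
    which are exactly the tasks in a cycle or waiting on one.
    """
    waiters = defaultdict(list)  # task -> tasks blocked on it
    roots = []
    for task, target in waiting_on.items():
        # Waiting on nothing, or on something that isn't a known task, makes a root.
        if target is None or target not in waiting_on:
            roots.append(task)
        else:
            waiters[target].append(task)

    lines, reached = [], set()
    # Iterative depth-first walk so long chains don't hit the recursion limit.
    stack = [(root, 0) for root in sorted(roots, reverse=True)]
    while stack:
        task, depth = stack.pop()
        reached.add(task)
        lines.append("  " * depth + task)
        for waiter in sorted(waiters[task], reverse=True):
            stack.append((waiter, depth + 1))
    return lines, set(waiting_on) - reached
```

For example, with main waiting on a, a waiting on b, and c and d waiting on each other, the forest contains the chain b, a, main, while c and d (plus anything waiting on them) are reported as unreachable.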

This revealed the problem immediately: there was a cycle of async tasks waiting on each other! It turned out that in very rare cases, one of the input graphs processed by the data generation script could contain a cycle, which we had believed was impossible. Because the script runs a single async task per node and deduplicates tasks by node ID, a cycle in an input graph produces a cycle of async tasks waiting on each other. With this realization, it was simple to add cycle detection to our algorithm, and we never saw the script stall again.
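One standard way to implement such a check, shown here as a hypothetical sketch rather than the exact code we used, is a depth-first search that tracks which nodes are on the current path: reaching one of them again means the graph has a cycle. The helper name find_cycle and the dict-of-lists graph representation are assumptions for illustration.

```python
from typing import Optional

_EXHAUSTED = object()  # sentinel returned by next() when a node has no more edges


def find_cycle(graph: dict[str, list[str]]) -> Optional[list[str]]:
    """Return one cycle as [n0, n1, ..., n0] if graph has one, else None.

    graph maps each node ID to the IDs it depends on. Uses iterative DFS,
    tracking nodes as on the current path or finished (absent = unvisited).
    """
    ON_PATH, FINISHED = 1, 2
    state: dict[str, int] = {}
    for start in graph:
        if start in state:
            continue
        state[start] = ON_PATH
        path = [start]
        edges = [iter(graph[start])]
        while path:
            nxt = next(edges[-1], _EXHAUSTED)
            if nxt is _EXHAUSTED:
                state[path.pop()] = FINISHED
                edges.pop()
            elif state.get(nxt) == ON_PATH:
                # Back edge: the cycle is the part of the path from nxt onward.
                return path[path.index(nxt):] + [nxt]
            elif nxt not in state:
                state[nxt] = ON_PATH
                path.append(nxt)
                edges.append(iter(graph.get(nxt, ())))
    return None
```

Running a check like this on each input graph before spawning per-node tasks turns a silent multi-day hang into an immediate, reportable error.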

▶ The conclusion

We’ve found python-memtools useful for solving other kinds of issues too. Here are a few of them:

• One of our in-house services had a bug that caused it to never respond to a client’s request, causing the client to hang forever. Using python-memtools, we could see the async task for that request, inspect its local variables, and find out exactly what that bad request was, which allowed us to easily reproduce and fix the bug on the server.

• Another of our in-house services had what looked like a memory leak: its memory usage would slowly increase over the course of a few days until the OOM killer eventually killed it and it was restarted. Using python-memtools, we were able to see what was taking up all the memory. It turned out to be unsent metrics data, which meant the metrics receiver was overloaded and falling behind; the socket stats on both sides of the connection confirmed this.

• We found a bad interaction between the gRPC client library and libuv that manifested as a thread hanging forever. The cause was a deadlock deep in the libuv implementation, triggered when a multithreaded Python process that had already imported both libuv and gRPC later created a subprocess by forking. Even though this deadlock occurred in C code, we used python-memtools to inspect the raw memory of the process and see why the thread couldn’t resume.
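For memory-growth investigations like the metrics case, a simple way to summarize a snapshot is a per-type histogram of instance counts and total bytes. This sketch assumes the objects found in a snapshot have already been reduced to (type name, size in bytes) pairs; that input format and the name top_types_by_size are hypothetical, not python-memtools’ interface.

```python
from collections import Counter
from typing import Iterable


def top_types_by_size(
    objects: Iterable[tuple[str, int]], n: int = 10
) -> list[tuple[str, int, int]]:
    """Return (type name, instance count, total bytes) for the n largest types."""
    counts = Counter()
    sizes = Counter()
    for type_name, size in objects:
        counts[type_name] += 1
        sizes[type_name] += size
    # most_common orders by total bytes, largest first.
    return [(name, counts[name], total) for name, total in sizes.most_common(n)]
```

A type whose count and total keep climbing across successive snapshots is a good place to start following references back to whatever is holding onto it.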

python-memtools is designed for Python 3.10, but we will add support for newer versions soon. You can try it out yourself. And if you’re interested in working on large-scale systems with us, we’re hiring!